Mixing and Looping with NAudio

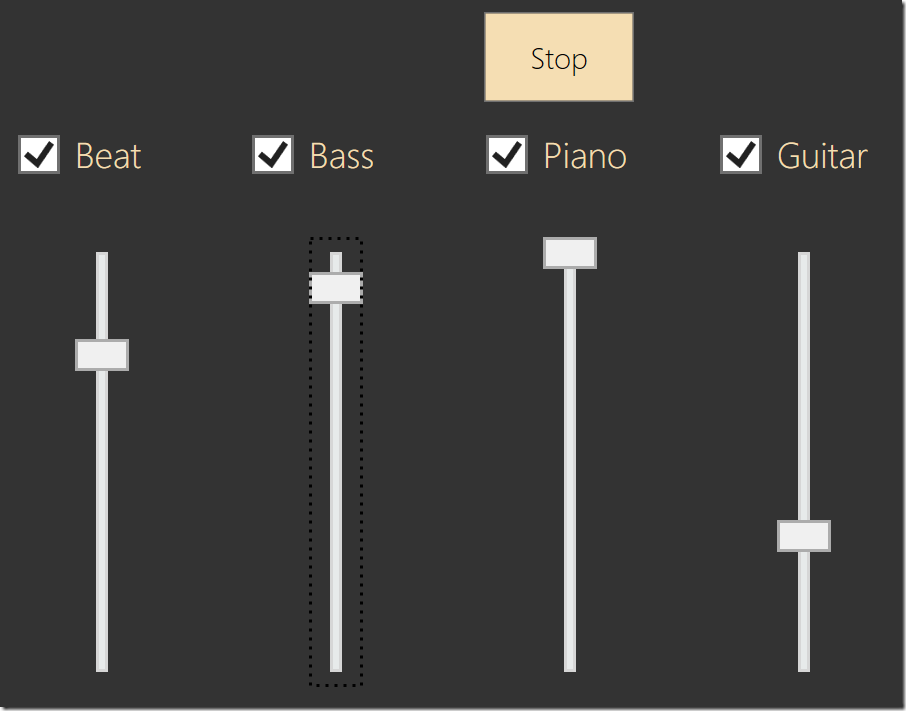

On a recent episode of .NET Rocks (LINK), Carl Franklin mentioned that he had used NAudio to create an application that mixes audio loops together, as part of his “Music to Code By” Kickstarter. He had four loops, for drums, bass, and guitar, and the application lets you adjust the volume of each one individually. He made a code sample of his application available for download here.

This is quite simple to set up with NAudio. To perform the looping part, Carl made use of a LoopStream (using a technique I describe here). The key to looping lies in the Read method: read from your source, and if you reach the end (your source returns 0, or fewer bytes than requested), reposition to the start and keep reading. The result is a WaveStream that never ends.

Here's the code for a LoopStream that a WaveFileReader can be passed into:

    using NAudio.Wave;

    /// <summary>
    /// Stream for looping playback
    /// </summary>
    public class LoopStream : WaveStream
    {
        WaveStream sourceStream;

        /// <summary>
        /// Creates a new Loop stream
        /// </summary>
        /// <param name="sourceStream">The stream to read from. Note: the Read method of this stream should return 0 when it reaches the end
        /// or else we will not loop to the start again.</param>
        public LoopStream(WaveStream sourceStream)
        {
            this.sourceStream = sourceStream;
            this.EnableLooping = true;
        }

        /// <summary>
        /// Use this to turn looping on or off
        /// </summary>
        public bool EnableLooping { get; set; }

        /// <summary>
        /// Return source stream's wave format
        /// </summary>
        public override WaveFormat WaveFormat
        {
            get { return sourceStream.WaveFormat; }
        }

        /// <summary>
        /// LoopStream simply returns the source stream's length
        /// </summary>
        public override long Length
        {
            get { return sourceStream.Length; }
        }

        /// <summary>
        /// LoopStream simply passes on positioning to source stream
        /// </summary>
        public override long Position
        {
            get { return sourceStream.Position; }
            set { sourceStream.Position = value; }
        }

        public override int Read(byte[] buffer, int offset, int count)
        {
            int totalBytesRead = 0;

            // keep reading until the caller's buffer is full
            while (totalBytesRead < count)
            {
                int bytesRead = sourceStream.Read(buffer, offset + totalBytesRead, count - totalBytesRead);
                if (bytesRead == 0)
                {
                    if (sourceStream.Position == 0 || !EnableLooping)
                    {
                        // source is empty (still at position 0) or looping is disabled: stop reading
                        break;
                    }
                    // loop: rewind the source and carry on filling the buffer
                    sourceStream.Position = 0;
                }
                totalBytesRead += bytesRead;
            }
            return totalBytesRead;
        }
    }
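To see what this Read loop does without any audio hardware, the same logic can be run against an ordinary in-memory stream. This is only a sketch: `LoopReadSketch` and `ReadLooped` are names made up for illustration, and a plain `MemoryStream` stands in for the WaveFileReader.

```csharp
using System;
using System.IO;

public static class LoopReadSketch
{
    // Fills 'count' bytes from 'source', rewinding to the start whenever
    // the source runs out (the same approach as LoopStream.Read above).
    public static int ReadLooped(Stream source, byte[] buffer, int offset, int count)
    {
        int totalBytesRead = 0;
        while (totalBytesRead < count)
        {
            int bytesRead = source.Read(buffer, offset + totalBytesRead, count - totalBytesRead);
            if (bytesRead == 0)
            {
                // An empty source would otherwise spin forever, so give up.
                if (source.Position == 0) break;
                source.Position = 0; // loop back to the start
            }
            totalBytesRead += bytesRead;
        }
        return totalBytesRead;
    }

    // Reads 10 bytes from a 4-byte source and formats the result.
    public static string Demo()
    {
        var source = new MemoryStream(new byte[] { 1, 2, 3, 4 });
        var buffer = new byte[10];
        int read = ReadLooped(source, buffer, 0, buffer.Length);
        return read + ": " + string.Join(",", buffer);
        // returns "10: 1,2,3,4,1,2,3,4,1,2"
    }
}
```

The caller asked for more data than the source holds, and the buffer still comes back completely full, which is what lets the output device keep playing past the end of the file.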

For mixing, the approach Carl took was simply to create four instances of DirectSoundOut and start them playing together. To allow the volume of each channel to be adjusted, he passed each LoopStream into a WaveChannel32, which converts to 32-bit floating point and has a Volume property (1.0 is full volume). To keep the four parts in sync, deselecting a part doesn't actually stop it playing; instead, it sets its volume to 0.
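The mute-by-volume idea might look something like the sketch below. `MuteSketch`, `CreateChannel` and `SetSelected` are hypothetical names of my own, not taken from Carl's sample; only `WaveChannel32` and its `Volume` property are NAudio's.

```csharp
using NAudio.Wave;

public static class MuteSketch
{
    // Wraps a source (for example a LoopStream) in a WaveChannel32 so that
    // its level can be changed while it plays. 1.0 is full volume.
    public static WaveChannel32 CreateChannel(WaveStream source)
    {
        return new WaveChannel32(source) { Volume = 1.0f };
    }

    // "Deselecting" a part silences it but leaves it playing, so its
    // position keeps advancing in step with the other parts.
    public static void SetSelected(WaveChannel32 channel, bool selected)
    {
        channel.Volume = selected ? 1.0f : 0.0f;
    }
}
```

Each channel created this way would then be handed to its own output device with `Init` and started with `Play`. Because a silenced channel is still being read, reselecting it picks up at the current point in the loop rather than from wherever it was stopped.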

This approach to synchronization works surprisingly well, but it is not actually guaranteed to keep the four parts synchronized. Each output device requests audio independently, so over time they could drift apart. A better approach is to use a single output device and feed each of the four WaveChannel32 instances into a mixer. Here’s an example block diagram showing a modified signal chain with two inputs feeding into a single mixer:

    ----------   ----------   -----------
    | Wave   |   | Loop   |   | Wave    |
    | File   |-->| Stream |-->| Channel |---
    | Reader |   |        |   | 32      |  |   ------------
    ----------   ----------   -----------  --->| Mixing   |
                                               | Wave     |
    ----------   ----------   -----------  --->| Provider |
    | Wave   |   | Loop   |   | Wave    |  |   | 32       |
    | File   |-->| Stream |-->| Channel |---   ------------
    | Reader |   |        |   | 32      |
    ----------   ----------   -----------

NAudio has a number of options available for mixing. The best is probably MixingSampleProvider, but for Carl's project, it was easier to use MixingWaveProvider32, since he's not making use of the ISampleProvider interface. MixingWaveProvider32 lets you mix together any IWaveProviders that are already in 32-bit floating point format.
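For comparison, here is a rough sketch of what the MixingSampleProvider route could look like. It is not what Carl's application does: `SampleMixerSketch` and `BuildMixer` are hypothetical names, and wrapping each input in a `VolumeSampleProvider` to get a per-track volume is my own assumption about how you might structure it.

```csharp
using System.Collections.Generic;
using System.Linq;
using NAudio.Wave;
using NAudio.Wave.SampleProviders;

public static class SampleMixerSketch
{
    // Converts each input to an ISampleProvider, gives it its own volume
    // control, and feeds them all into a single MixingSampleProvider.
    // All inputs must share the same sample rate and channel count, and
    // at least one input must be supplied.
    public static MixingSampleProvider BuildMixer(
        IEnumerable<IWaveProvider> loops,
        out List<VolumeSampleProvider> volumes)
    {
        volumes = loops
            .Select(loop => new VolumeSampleProvider(loop.ToSampleProvider()) { Volume = 1.0f })
            .ToList();
        return new MixingSampleProvider(volumes);
    }
}
```

The returned mixer can be passed to a single output device, and each entry in `volumes` plays the role that `WaveChannel32.Volume` plays in the WaveChannel32 version.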

MixingWaveProvider32 requires that you specify its inputs up front. So here, we could connect each of our inputs, and then start playing. With this simple change, Carl's mixing application is now guaranteed to not go out of sync. This is the recommended way to mix multiple sounds with NAudio.

Here's the code that sets up the mixer (Carl has a class called WavePlayer encapsulating the WaveFileReader, LoopStream and WaveChannel32, allowing you to access the WaveChannel32 with the Channel property):

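    // one WavePlayer (WaveFileReader -> LoopStream -> WaveChannel32) per loop file
    foreach (string file in files)
    {
        Clips.Add(new WavePlayer(file));
    }

    // feed every channel into one mixer, and play the mixer on a single output device
    var mixer = new MixingWaveProvider32(Clips.Select(c => c.Channel));
    audioOutput.Init(mixer);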
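Conceptually, mixing 32-bit floating point audio comes down to adding the inputs together sample by sample. The sketch below is a plain C# illustration of that idea, not NAudio's implementation; `MixSketch`, `Mix` and the `gain` parameter are names invented for this example.

```csharp
using System;

public static class MixSketch
{
    // Mixes equal-length float buffers by summing them sample by sample,
    // scaling every input by 'gain' first.
    public static float[] Mix(float[][] inputs, float gain)
    {
        if (inputs.Length == 0) return new float[0];

        var output = new float[inputs[0].Length];
        foreach (float[] input in inputs)
        {
            if (input.Length != output.Length)
                throw new ArgumentException("All inputs must have the same length.");

            for (int n = 0; n < output.Length; n++)
            {
                output[n] += input[n] * gain;
            }
        }
        return output;
    }
}
```

With two inputs that both hold a sample of 0.75, `Mix(inputs, 1.0f)` produces 1.5, which is beyond the ±1.0 full-scale range of floating point audio, while `Mix(inputs, 0.5f)` produces 0.75. This is why the mix gets louder as you add inputs, and why turning down each input's volume is a simple way to keep the overall level in check.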

You can download my modified version of Carl's application here.

The only caveat is that mixers require all their inputs to be in the same format. For this application, this isn’t a problem, but if you want to mix together sounds of arbitrary formats, you'd need to convert them all to a common format. This is something I cover in my NAudio Pluralsight course if you’re interested in finding out more about how to do this.
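As a rough idea of what such a conversion could involve, the sketch below brings an input to a chosen sample rate and to stereo using NAudio's sample providers. It is one possible approach under my own assumptions rather than the method from the course: `FormatSketch` and `ToCommonFormat` are hypothetical names, 44100 Hz is an arbitrary default, and inputs with more than two channels are not handled.

```csharp
using NAudio.Wave;
using NAudio.Wave.SampleProviders;

public static class FormatSketch
{
    // Returns a provider that delivers stereo float samples at 'sampleRate',
    // resampling and up-mixing the input only where needed.
    public static ISampleProvider ToCommonFormat(ISampleProvider input, int sampleRate = 44100)
    {
        ISampleProvider result = input;

        if (result.WaveFormat.SampleRate != sampleRate)
        {
            // fully managed resampler, so no platform codecs are required
            result = new WdlResamplingSampleProvider(result, sampleRate);
        }

        if (result.WaveFormat.Channels == 1)
        {
            // copy the single channel to both left and right
            result = new MonoToStereoSampleProvider(result);
        }

        return result;
    }
}
```

Once every input has been passed through a step like this, they all report the same WaveFormat and can be handed to a mixer together.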

A place for me to share what I'm learning: Azure, .NET, Docker, audio, microservices, and more...


Comments

Is there a way to make the mixed volume not louder?

Aldi

The sound produced will have a volume of about twice the sound that was originally in the overlapping section.

Yes, mixing sounds will result in a louder overall volume. You can always reduce the volume of the inputs when you mix multiple things together.

Mark Heath