Rapid Prototyping Web APIs with Azure Functions

One of my favourite things about the new Azure Functions service is how easy it makes prototyping application ideas. Without needing to provision or pay for a web server, I can quickly set up a webhook, a message queue processor or a scheduled task.

But what if I’m prototyping a web page and want a simple REST-like CRUD API? I decided to see if I could build a very simple in-memory database using Node.js and Azure Functions.

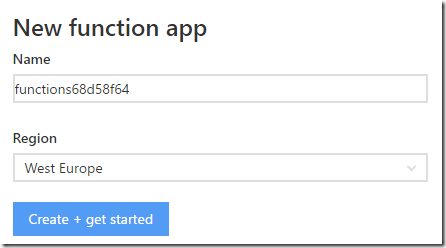

Step 0 – Create a function app

It’s really easy to get started with Azure Functions. If you’ve not used them yet, sign in to functions.azure.com and you’ll be offered the choice of opening an existing function app or creating a new one.

Creating one is just a matter of choosing which region to host it in. By default this creates a function app on the Dynamic pricing tier, which is what you want: you only pay for what you use, and there’s a generous free monthly grant, so for most prototyping purposes it’s likely to be completely free.

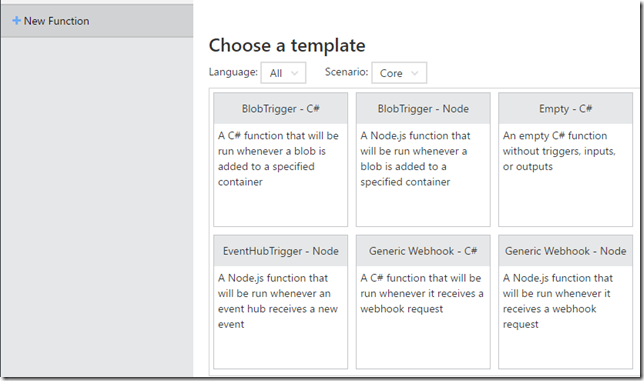

Step 1 – Create a new Node.js Function

From within the portal, select “New Function”, and choose the “Generic Webhook – Node” option.

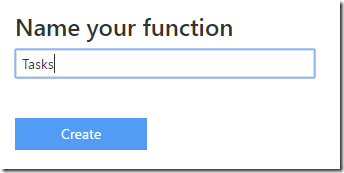

Give your function a name relating to the resource you’ll be managing, as it will be part of the URL:

Step 2 – Enable more HTTP Verbs

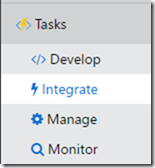

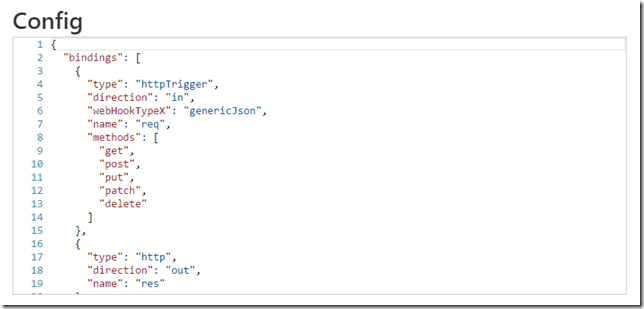

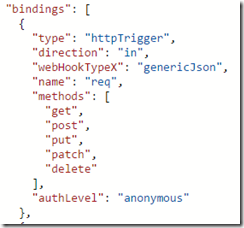

In the portal, under your new function, select the “Integrate” tab, and then choose “Advanced Editor” to let you edit the function.json file directly.

You’ll want to add in a new “methods” property of the “httpTrigger” binding, containing all the verbs you want to support. I’ve added get, post, put, patch and delete
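For reference, the edited function.json might look roughly like the following. To keep the examples in one language, it’s written as a JavaScript object and printed with JSON.stringify, so the output is what you’d paste into the Advanced Editor. This is a sketch under assumptions: the binding names req and res and the authLevel value reflect a typical HTTP-triggered function, and the template you started from may include other properties, which you should leave in place.

```javascript
// Hypothetical sketch of function.json for a "Tasks" function, written as a
// JavaScript object so it can be printed as JSON. The "methods" array is the
// change described above; keep any other properties your template created.
const functionJson = {
  bindings: [
    {
      type: "httpTrigger",
      direction: "in",
      name: "req",
      // Set to "anonymous" to stop requiring the ?code= key (see Step 6).
      authLevel: "function",
      // The HTTP verbs this function will accept.
      methods: ["get", "post", "put", "patch", "delete"]
    },
    {
      type: "http",
      direction: "out",
      name: "res"
    }
  ],
  disabled: false
};

// Print the JSON to paste into the Advanced Editor.
console.log(JSON.stringify(functionJson, null, 2));
```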

Step 3 – Add the In-Memory CRUD JavaScript File to your Function

I’ve made a simple in-memory database in Node.js that supports CRUD operations. I’m not really much of a JavaScript programmer or a REST expert, so I’m sure there’s a lot of scope for improvement (let me know in the comments), but here’s what I came up with:

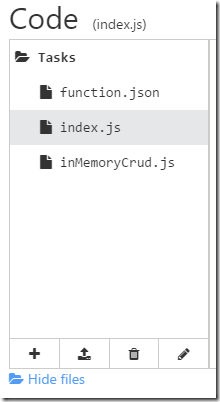

To get this into your function you have a few options. The easiest is to go to the “Develop” tab, click “View Files”, and drag and drop my inMemoryCrud.js file straight in. Alternatively, you can create a new file and paste the contents in.

If you look through the code, you’ll see it supports GET for all items or for a single item by id, inserting with POST, deleting with DELETE, replacing by id with PUT and even partial updates with PATCH. It optionally lets you specify required fields for POST and PUT, and there’s a seedData method for giving your API some initial data if needed.
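As a rough illustration, here is a hypothetical minimal sketch of such a module; it is not the original inMemoryCrud.js. It assumes the context-style Node.js programming model, where a handler sets context.res and calls context.done(), and that ids arrive in the query string as described in Step 6. The names seedData and handleRequest follow the text; the status codes, error messages and string ids are illustrative choices.

```javascript
// inMemoryCrud.js: a hypothetical minimal sketch of an in-memory CRUD store
// for a context-style Azure Functions Node.js handler.
// Items live in a plain object keyed by id, so they are lost on restart.
let items = {};
let nextId = 1;

// Replace the store's contents with initial data, assigning fresh string ids.
function seedData(seed) {
  items = {};
  nextId = 1;
  for (const item of seed) {
    const id = String(nextId++);
    items[id] = Object.assign({}, item, { id });
  }
}

// Set the HTTP response on the context and signal completion.
function respond(context, status, body) {
  context.res = { status: status, body: body };
  context.done();
}

// Names of required fields that are absent from the request body.
function missingFields(body, requiredFields) {
  return (requiredFields || []).filter(f => body == null || body[f] === undefined);
}

function handleRequest(context, req, requiredFields) {
  const method = (req.method || "GET").toUpperCase();
  const id = req.query && req.query.id; // e.g. /api/Tasks?id=3
  const body = req.body;

  if (method === "GET") {
    if (!id) return respond(context, 200, Object.values(items));
    return items[id] ? respond(context, 200, items[id]) : respond(context, 404, "Not found");
  }

  if (method === "POST") {
    const missing = missingFields(body, requiredFields);
    if (missing.length) return respond(context, 400, "Missing fields: " + missing.join(", "));
    const newId = String(nextId++);
    items[newId] = Object.assign({}, body, { id: newId });
    return respond(context, 201, items[newId]);
  }

  if (!["PUT", "PATCH", "DELETE"].includes(method)) {
    return respond(context, 405, "Method not allowed");
  }
  // PUT, PATCH and DELETE all need an existing id.
  if (!id || !items[id]) return respond(context, 404, "Not found");

  if (method === "PUT") {
    const missing = missingFields(body, requiredFields);
    if (missing.length) return respond(context, 400, "Missing fields: " + missing.join(", "));
    items[id] = Object.assign({}, body, { id }); // replace the whole item
    return respond(context, 200, items[id]);
  }
  if (method === "PATCH") {
    items[id] = Object.assign({}, items[id], body, { id }); // merge in supplied fields
    return respond(context, 200, items[id]);
  }
  delete items[id]; // DELETE
  return respond(context, 204);
}

module.exports = { seedData, handleRequest };
```

In this sketch a PUT replaces the whole item while a PATCH merges the supplied fields into the existing one, and the id is always taken from the query string rather than the body.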

Obviously, since this is an in-memory database, there are a few caveats. It will reset every time your function restarts, which happens whenever you edit the function’s code but can happen at other times too. Also, if two servers are running your function, each will have its own separate in-memory database, although Azure Functions is unlikely to scale your function app out to a second instance unless you put it under heavy load.

Step 4 – Update index.js

Our index.js file contains the entry point for the function. All we need to do is import our in-memory CRUD JavaScript file, seed it with any starting data we want, and then, when our function is called, pass the request off to handleRequest, optionally specifying any required fields.

Here’s an example index.js:
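As a hypothetical sketch, assuming the CRUD module exports seedData(items) and handleRequest(context, req, requiredFields), an index.js could look like the following. The seed items and the required title field are made-up examples. The wiring is wrapped in a createEntryPoint function (an invented name) so it can be tried with a stand-in module; in the deployed file you’d export the handler directly, as the final comment shows.

```javascript
// index.js: a hypothetical sketch of the function's entry point.
// Assumes the CRUD module exports seedData(items) and
// handleRequest(context, req, requiredFields).

// Made-up starting data for a Tasks API.
const seed = [
  { title: "Write blog post", done: false },
  { title: "Try Azure Functions", done: true }
];

// Fields that POST and PUT requests must include.
const requiredFields = ["title"];

// Seeds the store as soon as it is called (at module load time in the
// deployed file), then returns the (context, req) handler the runtime invokes.
function createEntryPoint(crud) {
  crud.seedData(seed);
  return function (context, req) {
    crud.handleRequest(context, req, requiredFields);
  };
}

// In the deployed function, export the handler:
// module.exports = createEntryPoint(require("./inMemoryCrud"));
```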

Step 5 – Enabling CORS

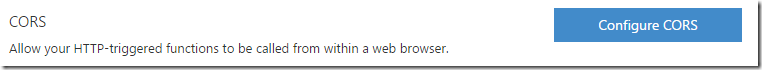

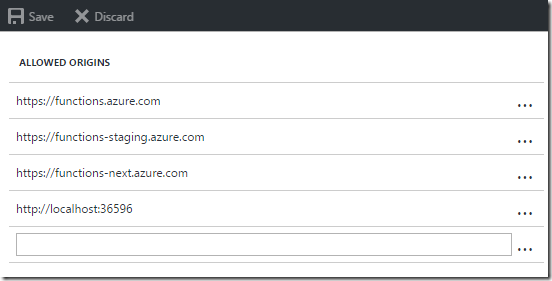

If you’re calling the function from a web-page then you’ll need to enable CORS. Thankfully this is very easy to do, although it is configured at the function app level rather than the function level. In the portal, select function app settings and then choose Configure CORS.

In there, you’ll see that there are some allowed origins by default, but you can easily add your own here, as I’ve done for localhost:

Step 6 – Calling the API

The Azure Functions portal shows you the URL of your function, which is based on your function app name and function name. It looks like this:

https://myfunctionapp.azurewebsites.net/api/Tasks?code=bzxkgm4e13gowmm2zo26jq0k9sz8p18k7j1aa5v2mgf9p3pgb9j57uu1tkzwiylvwa9ohb5u3di

The code parameter is Azure Functions’ way of securing the function, but for a prototype you may not want to bother with it. You can turn it off by setting the httpTrigger binding’s authLevel property to “anonymous” in the function.json file.

The other slight annoyance is that Azure Functions doesn’t let us use nice URLs like https://myfunctionapp.azurewebsites.net/api/Tasks/123 to GET or PUT a specific id. Instead, we must supply the id in the query string: https://myfunctionapp.azurewebsites.net/api/Tasks?id=123
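If you’re calling the API from a web page, a small helper that builds these URLs keeps the query-string handling in one place. This is a sketch under assumptions: functionUrl and updateTask are hypothetical names, fetch is assumed to be available (as it is in browsers and Node.js 18+), and the API is assumed to reply to a PUT with the updated item as JSON.

```javascript
// Hypothetical helper that builds a function URL of the form shown above.
// appName, functionName, id and code are all caller-supplied placeholders.
function functionUrl(appName, functionName, { id, code } = {}) {
  const url = new URL(`https://${appName}.azurewebsites.net/api/${functionName}`);
  if (id !== undefined) url.searchParams.set("id", String(id)); // ids go in the query string
  if (code) url.searchParams.set("code", code);                 // omit when authLevel is anonymous
  return url.toString();
}

// Example: replace a task by id with PUT, sending the new item as JSON.
async function updateTask(appName, id, task, code) {
  const response = await fetch(functionUrl(appName, "Tasks", { id, code }), {
    method: "PUT",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify(task)
  });
  if (!response.ok) throw new Error(`PUT failed with status ${response.status}`);
  return response.json();
}
```

For example, functionUrl("myfunctionapp", "Tasks", { id: 123 }) produces https://myfunctionapp.azurewebsites.net/api/Tasks?id=123.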

Is there a quicker way to set this up?

If that seems like quite a bit of work, remember that an Azure function is just a folder containing a function.json file and some source files. So you can simply copy the three files from my GitHub gist into as many folders as you want and, hey presto, you have in-memory web APIs for as many resources as you need (orders, products, users, etc.), just by copying and renaming folders. If you’re deploying via git (which you should be), this is very easy to do.

What if I want persistence?

Obviously in-memory is great for simple tests, but at some point you’ll want persistence. There are several options here, including Azure DocumentDB and Table Storage, but this post is long enough already, so I’ll save that for a future blog post.

A place for me to share what I'm learning: Azure, .NET, Docker, audio, microservices, and more...